Cao and Sengupta Develop Artificial Intelligence for Radar Cameras

ECE assistant professor Siyang Cao and electrical engineering graduate student Arindam Sengupta are working to improve radar imaging with machine learning. Their new approach, described in IEEE Robotics and Automation Letters, automatically generates datasets of labeled radar data paired with camera images.

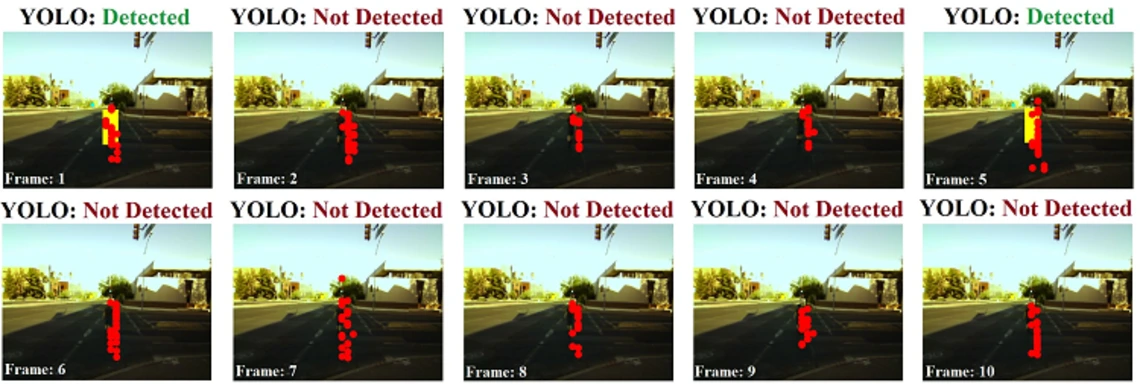

They run YOLO, a highly accurate object detection algorithm, on the camera image stream and then use an association technique to transfer those labels to the radar point cloud.

"Deep-learning applications using radar require a lot of labeled training data, and labeling radar data is non-trivial, an extremely time and labor-intensive process, mostly carried out by manually comparing it with a parallelly obtained image data-stream," primary researcher Sengupta told TechXplore. "Our idea here was that if the camera and radar are looking at the same object, then instead of looking at images manually, we can leverage an image-based object detection framework (YOLO in our case) to automatically label the radar data."
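The labeling idea can be illustrated with a minimal sketch. It is not the authors' association technique. It assumes the radar points have already been transformed into the camera's coordinate frame by an extrinsic calibration, and that the camera follows a simple pinhole model with hypothetical intrinsics `fx`, `fy`, `cx`, `cy`. Each radar point is projected into the image. If it falls inside a YOLO-style bounding box, it inherits that box's class label. The names `Box`, `project_point` and `label_radar_points` are illustrative only.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point3D = Tuple[float, float, float]


@dataclass
class Box:
    """Hypothetical 2-D detection from an image detector such as YOLO (pixel coordinates)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    label: str
    score: float


def project_point(p: Point3D, fx: float, fy: float,
                  cx: float, cy: float) -> Optional[Tuple[float, float]]:
    """Pinhole projection of a point already in the camera frame
    (x right, y down, z forward). Returns None for points behind the camera."""
    x, y, z = p
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


def label_radar_points(points: Sequence[Point3D], boxes: Sequence[Box],
                       fx: float, fy: float, cx: float, cy: float,
                       unlabeled: str = "unlabeled") -> List[str]:
    """Assign each radar point the label of the highest-scoring box that
    contains its image projection, or `unlabeled` if no box contains it."""
    labels: List[str] = []
    for p in points:
        uv = project_point(p, fx, fy, cx, cy)
        best: Optional[Box] = None
        if uv is not None:
            u, v = uv
            for b in boxes:
                inside = b.x_min <= u <= b.x_max and b.y_min <= v <= b.y_max
                if inside and (best is None or b.score > best.score):
                    best = b
        labels.append(best.label if best is not None else unlabeled)
    return labels


if __name__ == "__main__":
    radar_points = [(0.0, 0.0, 10.0), (5.0, 0.0, 10.0), (0.0, 0.0, -1.0)]
    detections = [Box(300, 220, 340, 260, "car", 0.9),
                  Box(550, 200, 600, 280, "person", 0.8)]
    print(label_radar_points(radar_points, detections, 500, 500, 320, 240))
```

With these made-up detections, the first point projects to pixel (320, 240) inside the "car" box and the second projects to (570, 240) inside the "person" box. The third lies behind the camera and stays unlabeled. A real pipeline would also have to handle time synchronization between the sensors, occlusion and calibration error. This sketch ignores all three.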

The method could help automate the generation of both radar-camera and radar-only datasets. That, in turn, could improve radar imaging and navigation in transportation applications.

"Our lab at the University of Arizona conducts data-driven mmWave radar research targeting autonomous, healthcare, defense and transportation domains," Cao said. "Some of our ongoing research includes investigating robust sensor-fusion based tracking schemes and further improving stand-alone mmWave radar perception using classical signal processing and deep learning."

Cao and Sengupta's work was also featured in MarkTechPost.